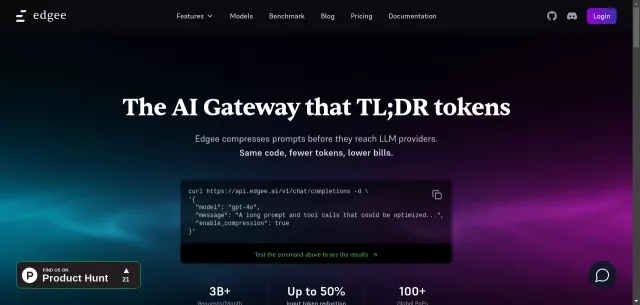

Edgee Review

Edgee is an AI gateway that does more than manage your LLM traffic: it compresses prompts at the edge before they reach your provider. The headline claim is a reduction in LLM costs of up to 50% without changes to your application code. Edgee achieves this through edge-native token compression, and pairs it with a single OpenAI-compatible API for more than 200 models, intelligent routing, and a promise of immediate ROI.

Edgee’s Core Capabilities

Edgee isn’t just a proxy; it’s an intelligent layer designed to optimize every facet of your AI operations. Here’s what sets it apart:

- Intelligent Token Compression: Reduce prompt size by up to 50% by removing redundancy while preserving semantic meaning. This means lower bills and faster responses, especially for complex RAG pipelines or multi-turn agents.

- Universal LLM Compatibility: Connect to any major LLM provider – OpenAI, Anthropic, Gemini, xAI, Mistral – all through one unified API.

- Robust Cost Governance: Tag requests with custom metadata to track usage by feature, team, or project. Set up alerts to catch cost spikes before they become a problem.

- Smart Routing & Observability: Edgee intelligently routes requests to the best provider for the job, normalizes responses, and gives you end-to-end visibility into latency, errors, and costs across your entire AI stack.

- Edge Models & Tools: Deploy private, serverless open-source LLMs or run small, fast models at the edge for tasks like classification or redaction, reducing latency and cost.
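To make the "one OpenAI-compatible API" and request tagging ideas concrete, here is a minimal sketch of what a call through such a gateway could look like. It only builds the request and does not send it. The base URL, the API key placeholder, the model name, and the `X-Request-Tags` header are all assumptions made for illustration; none of them come from Edgee's documentation, so check the official docs for the real endpoint and tagging mechanism.

```python
import json
import urllib.request

# Hypothetical values for illustration only; not taken from Edgee's docs.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"
API_KEY = "YOUR_GATEWAY_API_KEY"


def build_chat_request(model, user_message, tags=None):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    headers = {
        "Authorization": f"Bearer {API_KEY}",
        "Content-Type": "application/json",
    }
    # Assumed tagging convention: key=value pairs in a custom header,
    # so usage can be grouped by feature, team, or project.
    if tags:
        headers["X-Request-Tags"] = ",".join(
            f"{key}={value}" for key, value in sorted(tags.items())
        )
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    request = build_chat_request(
        "provider/model-name",  # placeholder model identifier
        "Summarize this support ticket in two sentences.",
        tags={"team": "support", "feature": "ticket-summary"},
    )
    print(request.full_url)
    print(request.data.decode("utf-8"))
```

Because the payload follows the OpenAI chat completions shape, switching an existing application to a gateway of this kind would, in principle, come down to changing the base URL and the key rather than rewriting request code.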

The Edgee Advantage for Developers

Edgee is aimed at teams that build and scale AI applications and want tighter control over cost and infrastructure. It addresses several pain points common to anyone working with LLMs:

- Significant Cost Reduction: Directly impact your budget by minimizing token usage without compromising output quality.

- Streamlined Provider Management: Simplify your architecture with a single API for all your LLM needs, enabling seamless switching between providers.

- Enhanced Performance & Reliability: Benefit from optimized prompt delivery and robust monitoring tools for stable, high-performing AI features.

- Rapid Innovation: Ship AI features faster and with greater confidence, knowing your infrastructure is optimized and controlled.

| Key Metric | Impact |
|---|---|
| Input Token Reduction | Up to 50% |
| Models Supported | 200+ |
| Global PoPs | 100+ |
| Monthly Requests Handled | 3B+ |
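As a rough way to see what an input token reduction means for a bill, the sketch below works through the arithmetic. The token volume and the price per million tokens are made-up figures, not Edgee or provider pricing, and the 50% figure is the advertised upper bound rather than a typical result. The calculation covers input tokens only, since that is the metric quoted; output token costs are unaffected by prompt compression.

```python
def estimate_input_savings(input_tokens, price_per_million_usd, reduction):
    """Return (baseline, compressed, saved) input-token cost in USD.

    reduction is the fraction of input tokens removed, between 0.0 and 1.0.
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0.0 and 1.0")
    baseline = input_tokens / 1_000_000 * price_per_million_usd
    compressed = baseline * (1.0 - reduction)
    return baseline, compressed, baseline - compressed


if __name__ == "__main__":
    # Hypothetical workload: 200M input tokens per month at $2.50 per million.
    baseline, compressed, saved = estimate_input_savings(
        input_tokens=200_000_000,
        price_per_million_usd=2.50,
        reduction=0.5,  # advertised best case
    )
    print(f"baseline:   ${baseline:.2f}")
    print(f"compressed: ${compressed:.2f}")
    print(f"saved:      ${saved:.2f}")
```

Rerunning the function with a lower `reduction` value gives a more conservative estimate for workloads whose prompts contain less redundancy.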

Top Alternatives to Edgee

Below are the best alternatives and similar tools to Edgee, selected and ranked by functionality, reliability, and user experience.